Autonomous Systems vs. Robots

Two of the most consequential platform categories in the AI-Industrial Complex are frequently conflated: autonomous systems and robots. The distinction is not semantic — it determines how platforms are designed, regulated, supplied, and deployed. Getting it right is the foundation for understanding everything from robotaxi fleet economics to humanoid actuator supply chains.

The Core Distinction

An autonomous system is a traditional machine — a vehicle, vessel, aircraft, or piece of heavy equipment — where autonomy technology replaces or augments the human operator. The machine's primary identity and mechanical architecture predate the autonomy stack. A self-driving truck is still a truck. An autonomous excavator is still an excavator. Autonomy is layered on top of a platform whose form factor was defined by its original human-operated function.

A robot is an embodied machine with no human-operated predecessor. It was designed from the ground up as an autonomous or semi-autonomous physical agent, not as a human-operated machine with autonomy added later. A humanoid robot is not a person with autonomy software. A quadruped is not a vehicle with legs. The mechanical architecture, actuator stack, compute profile, and operational framework are all defined by the robot's identity as an independent physical agent.

Autonomous System: A traditional machine category (vehicle, vessel, aircraft, equipment) in which human operation is replaced or augmented by an autonomy stack. Form factor and mechanical architecture are inherited from the pre-autonomous platform.

Robot: An embodied machine designed from first principles as a physical agent, capable of perceiving its environment, making decisions, and executing actions with varying degrees of independence from human control.

The practical consequence of this distinction: autonomous systems and robots share enabling technologies (sensors, inference compute, power electronics, batteries) but diverge sharply in actuator architecture, regulatory framework, supply chain dependencies, and operational deployment logic. A robotaxi and a humanoid robot may both use NVIDIA compute and LiDAR sensors. Below the sensing and compute layer, almost everything is different.
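The two definitions above reduce to a single test: was the form factor inherited from a human-operated machine, or was the machine designed from the start as a physical agent? A minimal Python sketch of that test follows. The `Platform` type, its field name, and the `classify` function are illustrative assumptions made for this example, not part of any published taxonomy.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    AUTONOMOUS_SYSTEM = "autonomous system"
    ROBOT = "robot"


@dataclass(frozen=True)
class Platform:
    name: str
    # True when the form factor is inherited from a human-operated machine
    # (truck, excavator, vessel, aircraft); False when the machine was
    # designed from first principles as a physical agent.
    inherits_human_operated_form: bool


def classify(platform: Platform) -> Category:
    """Apply the single test described in the text (hypothetical helper)."""
    if platform.inherits_human_operated_form:
        return Category.AUTONOMOUS_SYSTEM
    return Category.ROBOT


# The four examples used in the text above.
examples = [
    Platform("self-driving truck", inherits_human_operated_form=True),
    Platform("autonomous excavator", inherits_human_operated_form=True),
    Platform("humanoid robot", inherits_human_operated_form=False),
    Platform("quadruped", inherits_human_operated_form=False),
]

for p in examples:
    print(f"{p.name}: {classify(p).value}")
```

A single boolean is deliberately coarse. Platforms that sit on the boundary, such as delivery drones, would need a judgment call about which lineage their form factor actually comes from.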

Where They Overlap and Where They Diverge

| Dimension | Autonomous Systems | Robots |
|---|---|---|
| Origin identity | Traditional machine with autonomy added | Designed as autonomous agent from first principles |
| Form factor driver | Inherited from human-operated predecessor | Defined by task and operational environment |
| Propulsion / actuation | Traction motors, EV drivetrain (SiC inverters) | Joint actuators, harmonic drives, GaN servo drives |
| Power electronics | SiC MOSFETs dominant: high voltage, high current | GaN dominant at joint level: high frequency, compact form factor |
| Sensor stack | LiDAR, radar, camera: environment perception at vehicle scale | All of those plus proprioceptive sensors, force-torque sensors, and tactile arrays at joint/hand scale |
| Inference compute | NVIDIA DRIVE, Qualcomm Ride, Mobileye: driving-domain specific | NVIDIA Jetson/Thor, custom SoCs: manipulation and locomotion domains |
| SAE levels | SAE J3016 Levels 0–5 apply directly to road vehicles | SAE levels do not apply; a different capability framework is required |
| Regulatory framework | NHTSA, FMCSA, FAA, IMO depending on domain | OSHA, ISO 10218, ISO/TS 15066 (cobots); no unified AV-equivalent framework yet |
| Supply chain divergence | EV supply chain plus sensor/compute overlay | Parallel superset: adds harmonic drives, actuator modules, and tactile sensors with no EV equivalent |
| China concentration | High at battery and motor tier | Very high: harmonic reducers, actuator modules, and complete platforms |

Autonomous System Platform Types

Autonomous systems are organized by operational domain. The environment in which a platform operates determines its sensor requirements, regulatory framework, and autonomy stack architecture more than its vehicle type does. A Waymo robotaxi has more in common technically with an autonomous transit bus than with an autonomous mining truck, even though all three are wheeled ground vehicles: the first two share the urban road domain, while the mining truck operates off-highway.

| Domain | Platform Examples | Primary Autonomy Challenge | Regulatory Body |
|---|---|---|---|
| Road (urban) | Robotaxis, autonomous buses, delivery vans | Unstructured traffic, pedestrians, edge cases | NHTSA, state DMVs |
| Road (highway/freight) | Autonomous semi-trucks, platooning systems | Long-haul reliability, weather, handoff protocols | FMCSA, NHTSA |
| Off-Highway | Mining trucks, agricultural tractors, construction equipment | Unstructured terrain, GPS-denied environments | MSHA, site-operator governed |
| Marine | Autonomous tugs, survey vessels, cargo barges | Dynamic ocean environment, collision avoidance at scale | IMO, flag state authorities |
| Aviation | Cargo UAVs, eVTOL air taxis, inspection drones | Airspace integration, UTM, weather resilience | FAA, EASA |
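The domain-first organization can be sketched as a lookup keyed by operational domain rather than by vehicle type. The snippet below is an illustrative Python sketch: the `Domain` enum, the `REGULATORS` mapping, and the `shared_regulators` helper are names invented for this example, and the regulator lists are copied from the table above rather than from any authoritative registry.

```python
from enum import Enum


class Domain(Enum):
    ROAD_URBAN = "Road (urban)"
    ROAD_HIGHWAY_FREIGHT = "Road (highway/freight)"
    OFF_HIGHWAY = "Off-Highway"
    MARINE = "Marine"
    AVIATION = "Aviation"


# Regulatory bodies per domain, as listed in the table above.
REGULATORS = {
    Domain.ROAD_URBAN: ("NHTSA", "state DMVs"),
    Domain.ROAD_HIGHWAY_FREIGHT: ("FMCSA", "NHTSA"),
    Domain.OFF_HIGHWAY: ("MSHA", "site operator"),
    Domain.MARINE: ("IMO", "flag state authorities"),
    Domain.AVIATION: ("FAA", "EASA"),
}


def shared_regulators(a: Domain, b: Domain) -> set:
    """Return the regulators two domains have in common (hypothetical helper)."""
    return set(REGULATORS[a]) & set(REGULATORS[b])


# Urban and highway road platforms overlap on NHTSA.
print(shared_regulators(Domain.ROAD_URBAN, Domain.ROAD_HIGHWAY_FREIGHT))

# An urban robotaxi and a mining truck share no regulator in this table.
print(shared_regulators(Domain.ROAD_URBAN, Domain.OFF_HIGHWAY))
```

Keying on domain makes the robotaxi versus mining truck contrast explicit: two wheeled vehicles can land in entirely separate regulatory regimes.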

Robot Platform Types

Robots are organized first by form factor, then by operational environment. Unlike autonomous systems, where the operational domain is the primary organizing principle, a robot's form factor determines its mechanical architecture, actuator stack, and capability envelope before the deployment context is considered.

| Form Factor | Representative Platforms | Defining Capability | Primary Deployment |

|---|---|---|---|

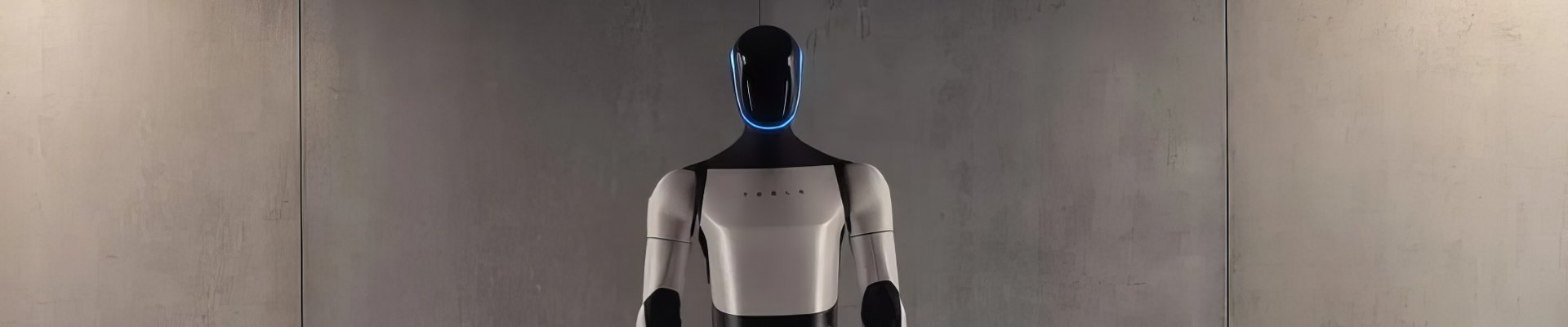

| Humanoid | Tesla Optimus, Figure 02, Agility Digit, Unitree H1 | Human-environment compatibility — designed for spaces and tools built for people | Manufacturing, warehousing, service, eldercare |

| Quadruped | Boston Dynamics Spot, ANYmal, Ghost Robotics Vision 60, Unitree B2 | Terrain traversal — stairs, rubble, unstructured environments | Inspection, security, defense, hazardous environments |

| Industrial | FANUC, KUKA, ABB, Universal Robots UR-series | Precision manipulation at high repeatability and speed | Assembly, welding, painting, pick-and-place |

| Sidewalk Delivery | Starship, Serve Robotics, Coco | Geo-fenced L4 autonomy in pedestrian environments | Last-mile delivery, campus logistics |

| Drones / UAVs | Zipline, Wing, Percepto, DJI Dock series | Aerial access — coverage, speed, vertical deployment | Delivery, inspection, cargo, surveillance |

The Humanoid Opportunity: Scale and Supply Chain

Humanoid robots represent the most consequential emerging supply chain in the electrification ecosystem. At Goldman Sachs' projected volumes (a $38 billion market by 2035, with annual shipments above one million units), the semiconductor, actuator, and sensor demand from humanoid platforms alone would create new supply curves that rival the EV traction inverter market in scale and exceed it in complexity.

Each humanoid platform contains an estimated 1,100 to 2,200 semiconductor devices, of which 400 to 800 are power semiconductors. The precision reducers that govern joint accuracy, chiefly strain-wave (harmonic) gears and cycloidal gearboxes, are currently manufactured at meaningful scale by a handful of Japanese companies (Harmonic Drive Systems, Nabtesco), with Chinese producers scaling rapidly. Tactile sensor arrays, required for dexterous hand manipulation, depend on a supply chain that does not yet exist at production scale for any platform.
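To see what the per-platform estimates imply in aggregate, the ranges can be multiplied by an annual unit volume. The Python sketch below does only that arithmetic. The per-platform ranges come from the paragraph above; the one-million-unit volume is an illustrative assumption, not a forecast, and the `Range` type and `annual_demand` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    low: int
    high: int

    def scale(self, units: int) -> "Range":
        """Multiply both ends of the range by a unit count."""
        return Range(self.low * units, self.high * units)


# Per-platform estimates quoted in the text above.
DEVICES_PER_HUMANOID = Range(1_100, 2_200)
POWER_DEVICES_PER_HUMANOID = Range(400, 800)


def annual_demand(units_per_year: int) -> dict:
    """Aggregate device demand for a given annual humanoid volume."""
    return {
        "all_devices": DEVICES_PER_HUMANOID.scale(units_per_year),
        "power_devices": POWER_DEVICES_PER_HUMANOID.scale(units_per_year),
    }


# Assumed volume for illustration only.
demand = annual_demand(1_000_000)
for label, r in demand.items():
    print(f"{label}: {r.low:,} to {r.high:,}")
```

Under that assumed volume, the estimates work out to 1.1 to 2.2 billion semiconductor devices per year, of which 400 to 800 million would be power semiconductors. The result scales linearly, so other volume scenarios only require changing the argument.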

See: Humanoid Robot Supply Chain · Humanoid Actuator Supply Chain

Robots in the ElectronsX Framework

ElectronsX covers robots as a peer domain to electric vehicles — not a derivative or subset. The Robots node covers platform hardware, form factor, actuator architecture, and supply chain. The Autonomy node covers the software and sensor stack that governs robot behavior. The Fleets node covers the operational and economic framework for deploying robotic systems at scale. These three lenses apply to robotic platforms exactly as they apply to electric vehicles — the same asset, three analytical treatments.