Edge & Local Inference Compute Substrate

Edge and local inference compute is the intelligence substrate of the AI-Industrial Complex — the embedded processing layer that enables electrified and autonomous systems to perceive, decide, and act in real time without round-tripping to a central cloud. It is not an automotive technology or a robotics technology or an energy technology. It is a universal capability layer that appears at every node in the AI-Industrial Complex where a physical system must close a control loop faster than network latency allows.

The transition from cloud-dependent automation to genuinely autonomous operation is, at its core, an inference compute transition. A system that must query a remote server before acting is not autonomous — it is remotely operated. A system with sufficient local inference capacity to perceive its environment, evaluate options, and execute decisions independently is autonomous regardless of its form factor. Edge inference compute is what makes that transition possible across every electrified domain simultaneously.

Why Local Inference — The First Principles Case

Four constraints make local inference mandatory for operational systems — not preferable, mandatory:

Latency. A humanoid robot hand closing around a fragile object, an AV braking for a pedestrian, a microgrid islanding during a grid fault: all require response times under 100 milliseconds, and often far less. Round-trip cloud latency typically runs from tens to hundreds of milliseconds under good conditions and can stretch to seconds under real-world network variability. A loop with that much delay and jitter cannot close safety-critical control.
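To make the latency constraint concrete, the sketch below estimates how far a vehicle travels while it waits for a decision. The 50 km/h speed and the list of latencies are illustrative assumptions, not figures from any specific deployment.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at constant speed while a decision is in flight."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0
    return speed_m_per_s * latency_ms / 1000.0


if __name__ == "__main__":
    ASSUMED_SPEED_KMH = 50.0  # assumption: urban driving speed
    for latency_ms in (10, 50, 100, 300, 1000):
        metres = distance_during_latency(ASSUMED_SPEED_KMH, latency_ms)
        print(f"{latency_ms:>5} ms -> {metres:5.2f} m at {ASSUMED_SPEED_KMH:.0f} km/h")
```

The distance is covered before braking even begins, so every added millisecond of decision latency lengthens the total stopping distance.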

Connectivity. An autonomous mining truck operating underground with no GPS or cellular coverage, a BESS managing a grid disturbance during a fiber outage, a humanoid robot in a factory with RF interference: all must operate without reliable network access. Local inference is the only architecture that guarantees operation when connectivity fails.
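One way to express this constraint in software is a local-first rule: the on-board decision always stands on its own, and remote input is treated as optional advice that expires. The sketch below is a minimal illustration. The `CloudHint` type, the speed-limit example, and the 5-second freshness window are hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CloudHint:
    """Hypothetical advisory message from a remote fleet service."""
    speed_limit_mps: float
    received_at_s: float


def select_speed_limit(
    local_limit_mps: float,
    hint: Optional[CloudHint],
    now_s: float,
    max_hint_age_s: float = 5.0,  # assumption: hints older than this are ignored
) -> float:
    """Return the limit to enforce; never blocks on, or requires, the network."""
    if hint is None or now_s - hint.received_at_s > max_hint_age_s:
        return local_limit_mps  # link down or hint stale: local decision stands
    # A fresh hint may only tighten the locally computed limit.
    return min(local_limit_mps, hint.speed_limit_mps)


if __name__ == "__main__":
    fresh = CloudHint(speed_limit_mps=6.0, received_at_s=99.0)
    stale = CloudHint(speed_limit_mps=6.0, received_at_s=10.0)
    print(select_speed_limit(10.0, fresh, now_s=100.0))
    print(select_speed_limit(10.0, stale, now_s=100.0))
    print(select_speed_limit(10.0, None, now_s=100.0))
```

The design choice is that losing the link degrades optimization quality but never stops the control loop.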

Bandwidth. A 64-beam LiDAR generates approximately 1.3 million points per second. A humanoid robot with full proprioceptive sensing generates continuous high-frequency joint state data across 30–50 degrees of freedom. Streaming raw data from a full sensor suite to a cloud for inference is impractical over the wireless links these platforms depend on. Processing must happen where the data is generated.
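A rough calculation shows the scale involved. The points-per-second figure comes from the text above; the 16 bytes per point (x, y, z, and intensity as 32-bit floats) is an assumption, since actual encodings vary by sensor and driver.

```python
LIDAR_POINTS_PER_SECOND = 1_300_000  # figure quoted in the text
ASSUMED_BYTES_PER_POINT = 16         # assumption: x, y, z, intensity as float32


def raw_stream_mbit_per_s(points_per_second: float, bytes_per_point: int) -> float:
    """Uncompressed sensor stream rate in megabits per second."""
    return points_per_second * bytes_per_point * 8 / 1_000_000


if __name__ == "__main__":
    per_sensor = raw_stream_mbit_per_s(LIDAR_POINTS_PER_SECOND, ASSUMED_BYTES_PER_POINT)
    for sensor_count in (1, 2, 4):
        print(f"{sensor_count} LiDAR(s): {sensor_count * per_sensor:.1f} Mbit/s raw")
```

Under these assumptions a single LiDAR already needs a sustained uplink well beyond 100 Mbit/s, before cameras or radar are added.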

Data sovereignty and security. Operational technology networks — factory floors, utility infrastructure, port terminals, military logistics — cannot route decision-critical data through public cloud infrastructure. Air-gapped and isolated network environments require fully local inference stacks with no external dependency.

The Inference Compute Continuum

Inference compute exists on a continuum from hyperscale cloud clusters to deeply embedded microcontrollers. ElectronsX covers the deployment end of this continuum, where inference meets physical systems. DatacentersX covers the datacenter end: hyperscale and on-premise inference infrastructure.

| Tier | Location | Latency Target | Primary Use Cases | Coverage |
|---|---|---|---|---|
| Cloud Training | Hyperscale datacenter | Hours to days | Model training, simulation, fleet-level data aggregation | DatacentersX |
| Cloud Inference | Hyperscale / regional datacenter | 100ms–seconds | Non-real-time analytics, fleet telemetry processing, OTA model updates | DatacentersX |
| Edge Datacenter | Regional / on-premise facility | 10–100ms | Fleet coordination, depot energy management, multi-site operations | DatacentersX / ElectronsX |
| On-Vehicle / On-Robot | Moving platform | 1–50ms | Perception, path planning, motor control, collision avoidance | ElectronsX |
| Infrastructure Node | Fixed facility: depot, microgrid, BESS, FED | 1–100ms | Energy dispatch, grid control, charging orchestration, facility automation | ElectronsX |
| Embedded Controller | Component level: BMS, motor controller, sensor node | <1ms | Cell-level battery management, joint torque control, real-time fault detection | ElectronsX |
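The tier table can be read as a feasibility filter: a tier is ruled out for a control loop when even its best-case latency exceeds the loop deadline. The sketch below encodes that reading. Treating the lower bound of each latency range as the best case is an assumption made for illustration, and passing the filter is necessary but not sufficient, since worst-case latency and jitter still have to be checked.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    best_case_ms: float  # assumption: lower bound of the range in the table


# Cloud Training is omitted because it does not serve real-time decisions.
TIERS = (
    Tier("Cloud Inference", 100.0),
    Tier("Edge Datacenter", 10.0),
    Tier("On-Vehicle / On-Robot", 1.0),
    Tier("Infrastructure Node", 1.0),
    Tier("Embedded Controller", 0.0),
)


def tiers_not_ruled_out(deadline_ms: float) -> list[str]:
    """Tiers whose best-case latency does not already exceed the deadline."""
    return [tier.name for tier in TIERS if tier.best_case_ms <= deadline_ms]


if __name__ == "__main__":
    for deadline_ms in (0.5, 5.0, 50.0, 500.0):
        print(f"{deadline_ms:>6} ms deadline: {tiers_not_ruled_out(deadline_ms)}")
```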

Deployment Domain Map

Edge inference compute appears across every domain of the AI-Industrial Complex. The table below maps each primary deployment context to the specific inference function, compute platform class, and why local processing is mandatory rather than optional.

| Domain | Deployment Context | Inference Function | Compute Platform Class | Why Local is Mandatory |

|---|---|---|---|---|

| Autonomous Vehicles | Robotaxi, autonomous truck, autonomous bus | Perception, object detection, path planning, decision execution | NVIDIA DRIVE Orin/Thor, Qualcomm Ride, Mobileye EyeQ | Collision avoidance requires sub-50ms response — cloud latency is incompatible with safety |

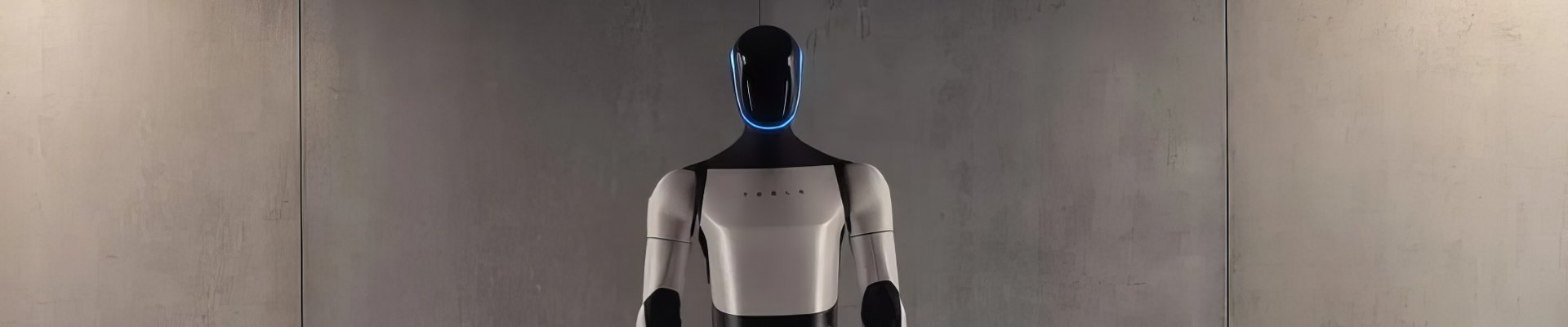

| Humanoid Robots | Factory, warehouse, service deployment | Locomotion control, manipulation planning, object recognition, human interaction | NVIDIA Jetson Thor, custom SoCs (Tesla FSD-derived, Figure AI) | 30–50 DOF real-time control loops require sub-1ms joint feedback — physically impossible via cloud |

| Quadruped Robots | Inspection, security, defense | Terrain adaptation, gait control, obstacle navigation, mission execution | NVIDIA Jetson, custom ARM-based compute | Dynamic balance requires continuous proprioceptive feedback loop — no network dependency tolerated |

| Autonomous eVTOL | Air taxi, cargo UAV, inspection drone | Flight control, airspace awareness, obstacle avoidance, landing execution | Aerospace-grade flight computers, NVIDIA Jetson, custom safety-certified SoCs | FAA DO-178C certification requires deterministic local execution — no cloud dependency in safety-critical flight path |

| Fleet Energy Depot | FED edge compute gateway | Vehicle telemetry processing, charging schedule optimization, energy dispatch, grid interface management | Industrial edge servers, ruggedized x86/ARM compute, NVIDIA Jetson for AI-enhanced dispatch | Depot operations must continue during WAN outages — charging orchestration cannot depend on cloud availability |

| Microgrid Controller | Industrial microgrid, campus microgrid, FED microgrid | DER dispatch, islanding detection, frequency regulation, load forecasting | Real-time controllers (Siemens, Schneider, ABB), embedded ARM, FPGA-based control | Grid islanding decisions require sub-cycle response (<16ms at 60Hz) — cloud round-trip is physically impossible |

| BESS Management | Utility-scale and depot BESS systems | Cell-level SoH modeling, thermal runaway prediction, charge/discharge optimization, fault isolation | Embedded BMS processors, edge AI accelerators for predictive analytics | Thermal runaway propagation occurs in seconds — predictive intervention requires local real-time inference against cell telemetry |

| Grid Edge / DER | Grid edge controllers, smart inverters, DER aggregators | Frequency response, voltage regulation, demand response execution, V2G coordination | Industrial RTUs, embedded ARM processors, FPGA-based grid controllers | FERC Order 2222 frequency response requirements operate at grid timescales — millisecond-level local execution required |

| Industrial Robots | Factory floor — welding, assembly, pick-and-place | Path planning, force control, vision-guided manipulation, collision detection | OEM robot controllers (FANUC, KUKA, ABB), NVIDIA Isaac platform | Deterministic cycle times require microsecond-level control loop execution — network jitter is unacceptable in precision manufacturing |

| Autonomous Off-Highway | Mining trucks, agricultural equipment, construction machinery | Terrain mapping, obstacle detection, haul route optimization, implement control | Ruggedized NVIDIA DRIVE, custom industrial compute, Caterpillar/Komatsu proprietary systems | GPS-denied underground and remote environments make cloud dependency operationally unacceptable |

| Autonomous Maritime | Autonomous tugs, survey vessels, port AGVs | Navigation, collision avoidance, berth management, cargo handling coordination | Marine-certified edge compute, NVIDIA Jetson, custom navigation processors | Maritime connectivity is unreliable — vessels must navigate safely in communication blackout conditions |

| Solid-State Transformers | Grid-edge SST deployments, FED integration points | Power flow control, harmonic compensation, fault detection, bidirectional energy management | Embedded DSPs, FPGA-based real-time controllers, ARM Cortex-M class | SST switching control operates at 10–100kHz — requires deterministic embedded execution with no external latency |
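Several timing figures in the table follow from period arithmetic: one cycle lasts 1/f. The sketch below reproduces the grid-cycle and switching-period numbers and shows how many grid cycles pass during a network round trip. The 100 ms round-trip value is an assumption for illustration.

```python
def period_us(frequency_hz: float) -> float:
    """Duration of one cycle in microseconds."""
    return 1_000_000.0 / frequency_hz


def cycles_elapsed(delay_ms: float, frequency_hz: float) -> float:
    """Number of cycles that pass during a given delay."""
    return delay_ms / 1000.0 * frequency_hz


if __name__ == "__main__":
    print(f"60 Hz grid cycle:         {period_us(60) / 1000:.2f} ms")
    print(f"50 Hz grid cycle:         {period_us(50) / 1000:.2f} ms")
    print(f"10 kHz switching period:  {period_us(10_000):.0f} us")
    print(f"100 kHz switching period: {period_us(100_000):.0f} us")

    ASSUMED_ROUND_TRIP_MS = 100.0  # assumption: illustrative cloud round trip
    missed = cycles_elapsed(ASSUMED_ROUND_TRIP_MS, 60)
    print(f"Grid cycles during a {ASSUMED_ROUND_TRIP_MS:.0f} ms round trip: {missed:.1f}")
```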

The Power-Intelligence Coupling

Edge inference compute and SiC/GaN power electronics are not independent substrate layers. They are coupled at every deployment node in the AI-Industrial Complex.

Every edge inference deployment is simultaneously a power electronics problem. A humanoid robot running a 30W inference SoC requires a GaN point-of-load converter stepping 48V bus voltage down to sub-1V at MHz switching frequency — within the robot's physical and thermal envelope. A Fleet Energy Depot edge compute gateway requires isolated, conditioned power derived from the depot's SiC-based power management architecture. A BESS management processor requires isolation from the high-voltage battery bus through SiC-based isolation stages.
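The humanoid example can be put in numbers with the ideal buck-converter relation D = V_out / V_in. The sketch below assumes a 0.8 V core rail and a 1 MHz switching frequency as stand-ins for the "sub-1V" and "MHz" figures in the text, and it ignores losses. Real point-of-load designs may use multi-stage or other topologies.

```python
def ideal_buck_duty_cycle(v_in: float, v_out: float) -> float:
    """Duty cycle of a lossless buck converter in continuous conduction."""
    if not 0 < v_out <= v_in:
        raise ValueError("a buck converter needs 0 < v_out <= v_in")
    return v_out / v_in


def on_time_ns(v_in: float, v_out: float, switching_hz: float) -> float:
    """High-side switch on-time per cycle, in nanoseconds."""
    return ideal_buck_duty_cycle(v_in, v_out) / switching_hz * 1e9


def load_current_a(power_w: float, v_out: float) -> float:
    """Current the converter must deliver at the output rail."""
    return power_w / v_out


if __name__ == "__main__":
    V_BUS = 48.0           # bus voltage from the text
    SOC_POWER_W = 30.0     # SoC power from the text
    ASSUMED_V_CORE = 0.8   # assumption: one possible sub-1V rail
    ASSUMED_F_SW = 1.0e6   # assumption: 1 MHz switching

    print(f"Duty cycle:   {ideal_buck_duty_cycle(V_BUS, ASSUMED_V_CORE):.4f}")
    print(f"On-time:      {on_time_ns(V_BUS, ASSUMED_V_CORE, ASSUMED_F_SW):.1f} ns")
    print(f"Load current: {load_current_a(SOC_POWER_W, ASSUMED_V_CORE):.1f} A")
```

Under these assumptions the converter must deliver tens of amps with a switch on-time of only a few tens of nanoseconds, which illustrates why fast-switching devices matter at this node.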

The two universal substrates define every autonomous node in the AI-Industrial Complex:

- Power substrate: SiC and GaN transform, regulate, and route energy to every subsystem. Without power electronics nodes there is no energy delivery to inference compute.

- Intelligence substrate: Edge inference compute perceives, decides, and acts on the physical environment. Without local inference there is no autonomy, only automation.

A system with power electronics but no inference compute is electrified but not autonomous. A system with inference compute but no power electronics cannot function. Both substrates are required simultaneously at every autonomous node. This coupling is why the AI-Industrial Complex is a unified system rather than a collection of separate markets.

See also: SiC & GaN: The Universal Power Substrate

Compute Platform Landscape

The edge inference compute market is organized around five distinct platform categories serving different deployment requirements. The chip architecture and supply chain upstream of these platforms is covered on SemiconductorX under AI Accelerators and Edge AI compute.

| Platform Category | Key Platforms | Primary Deployment | Performance Class | Key Characteristic |
|---|---|---|---|---|
| Automotive-Grade SoC | NVIDIA DRIVE Orin (254 TOPS), DRIVE Thor (2,000 TOPS), Qualcomm Ride, Mobileye EyeQ 6 | L2+ through L4 autonomous vehicles | 100–2,000 TOPS | ASIL-D functional safety certification, automotive temperature range, OTA updateable |
| Robotics Compute Module | NVIDIA Jetson Orin (275 TOPS), Jetson Thor, custom SoCs (Tesla, Figure AI, 1X Technologies) | Humanoids, quadrupeds, industrial robots, drones | 10–275 TOPS | Compact form factor, power efficiency critical, Isaac ROS ecosystem |
| Industrial Edge Server | NVIDIA IGX Orin, Dell Edge Gateway, Siemens SIMATIC IPC, Advantech ruggedized systems | FED gateways, microgrid controllers, factory AI nodes, port and depot operations | Variable (CPU + GPU configurations) | DIN-rail or rack mount, wide temperature, OT network integration, long lifecycle |
| Real-Time Controller | Siemens SIMATIC S7, Schneider Modicon, ABB AC500, National Instruments CompactRIO | Microgrid control, BESS management, grid edge, motor drives | Deterministic microsecond-class cycle times | Hard real-time OS, IEC 61131-3 programming, functional safety certification, decades-long deployment lifecycle |
| Embedded MCU/DSP | TI TMS320 DSP series, STM32 ARM Cortex-M, NXP S32 automotive MCU, Infineon AURIX | BMS, motor controllers, gate drivers, sensor fusion nodes, safety monitors | MHz-class, deterministic sub-millisecond | Ultra-low power, deeply embedded, ASIL-B/D safety, produced in billions of units annually |

Edge Inference and the Six Autonomy Framework

Edge inference compute is the enabling technology for Operational Autonomy, the sixth and final layer of the Six Autonomy Framework. Operational Autonomy is defined as freedom from dependence on human physical presence: the ability of a system to execute its mission continuously without requiring human intervention in the operational loop.

That capability is impossible without sufficient local inference capacity. A system that cannot perceive and decide locally cannot operate without human oversight. The progression from FA-0 (fully human-dependent) to FA-3 (fully operationally autonomous) maps directly onto the inference compute architecture deployed at each level — from no onboard inference at FA-0 to full onboard perception-decision-action closure at FA-3.

Data Autonomy — the fifth framework layer — is the prerequisite. A system that depends on centrally hosted AI models for its inference capability has a rented intelligence architecture. Genuine operational autonomy requires that the inference models themselves be locally deployed, locally updated via OTA, and locally executable without external model access. Edge inference compute is the hardware substrate that makes Data Autonomy physically realizable.

See also: Operational Autonomy · Data Autonomy · Six Autonomy Framework

The Fleet Energy Depot Intelligence Layer

The Fleet Energy Depot is the deployment context where edge inference compute has the most direct operational impact on electrified fleet economics. The FED edge compute gateway is the intelligence node that transforms a charging depot into an energy-intelligent operational platform.

At a fully instrumented FED, the edge compute gateway processes incoming vehicle telemetry — state of charge, battery health, predicted return time, energy consumed per route — and uses that data to optimize charging schedules, dispatch BESS charge and discharge cycles, manage grid interface transactions including V2G, and coordinate with the microgrid controller for energy autonomy operations. None of these functions can tolerate cloud latency or cloud dependency — they operate on the depot's internal OT network with the edge compute gateway as the primary intelligence node.
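The charging-schedule step can be illustrated with a simple earliest-departure-first allocator that shares a fixed site power limit across vehicles. This is a sketch only, not a description of any actual FED gateway: the `Vehicle` fields, the slot model, and the greedy policy are all assumptions, and a real scheduler would also weigh tariffs, BESS state, and V2G commitments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vehicle:
    vehicle_id: str
    energy_needed_kwh: float
    departure_slot: int   # first time slot in which the vehicle is gone
    max_charge_kw: float


def schedule_charging(vehicles, site_limit_kw, num_slots, slot_hours=0.25):
    """Greedy plan: in each slot, serve the earliest-departing vehicles first.

    Returns (plan, remaining): plan maps vehicle_id to kW per slot, and
    remaining maps vehicle_id to any energy (kWh) that could not be delivered.
    """
    remaining = {v.vehicle_id: v.energy_needed_kwh for v in vehicles}
    plan = {v.vehicle_id: [0.0] * num_slots for v in vehicles}
    by_departure = sorted(vehicles, key=lambda v: v.departure_slot)

    for slot in range(num_slots):
        available_kw = site_limit_kw
        for v in by_departure:
            if slot >= v.departure_slot or remaining[v.vehicle_id] <= 0:
                continue
            kw_to_finish = remaining[v.vehicle_id] / slot_hours
            kw = min(v.max_charge_kw, available_kw, kw_to_finish)
            if kw <= 0:
                continue  # site limit already used up in this slot
            plan[v.vehicle_id][slot] = kw
            remaining[v.vehicle_id] -= kw * slot_hours
            available_kw -= kw
    return plan, remaining


if __name__ == "__main__":
    fleet = [
        Vehicle("truck-A", energy_needed_kwh=100.0, departure_slot=2, max_charge_kw=100.0),
        Vehicle("truck-B", energy_needed_kwh=50.0, departure_slot=3, max_charge_kw=50.0),
    ]
    plan, remaining = schedule_charging(fleet, site_limit_kw=100.0, num_slots=3, slot_hours=1.0)
    for vehicle_id, kw_per_slot in plan.items():
        print(vehicle_id, kw_per_slot, "unmet kWh:", remaining[vehicle_id])
```

Because the plan depends only on telemetry already inside the depot, it can be recomputed locally whenever a vehicle arrives or the site limit changes, with or without a WAN link.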

The FED edge compute architecture bridges three domains simultaneously: fleet operations (vehicle telemetry and charging orchestration), energy management (BESS dispatch and grid interface), and facility automation (yard management, autonomous charging, security). This convergence makes the FED edge compute gateway one of the most complex embedded intelligence deployments in the electrification ecosystem — and one of the least discussed in public technical literature.

See also: Fleet Energy Depot Overview · FED Edge Compute System · Energy Autonomy

The EX–SX–DX Boundary

Edge inference compute is a three-way boundary node across the SiliconPlans network — the only content domain that connects all three primary technical sites simultaneously.

ElectronsX (this page) covers the application deployment layer: where inference compute is installed, what it does in each electrified and autonomous system, and why local processing is operationally mandatory.

SemiconductorX covers the substrate and chip architecture layer — GPU and accelerator design, inference-optimized silicon (transformer engines, tensor cores, sparse inference architectures), advanced packaging for inference modules (CoWoS, HBM integration), and the competitive landscape of inference chip producers from NVIDIA and Qualcomm to custom silicon at Tesla, Apple, and Amazon.

DatacentersX covers the infrastructure layer — hyperscale and on-premise inference clusters, the AI factory architecture, inference workload optimization, and the energy and cooling infrastructure that hyperscale inference requires.

The three sites cover three complementary analytical layers of the same capability, with shared coverage only at the edge datacenter tier. A fleet operator or autonomy engineer reading this page needs ElectronsX for deployment context, SemiconductorX for chip architecture and sourcing intelligence, and DatacentersX for the cloud training infrastructure that produces the models their edge systems run on.